AI drew up a Justification Sheet for the Deadly Strike on the School Building. But AI did not know school kids were inside. And apparently Human Verification was not done?

The reports regarding the March 2, 2026, strike on the Shajareh Tayyebeh school in Minab, Iran, suggest a complex interplay between AI systems and human oversight.

Based on the investigation by Military Times and Congressional inquiries, here is the breakdown of AI’s involvement:

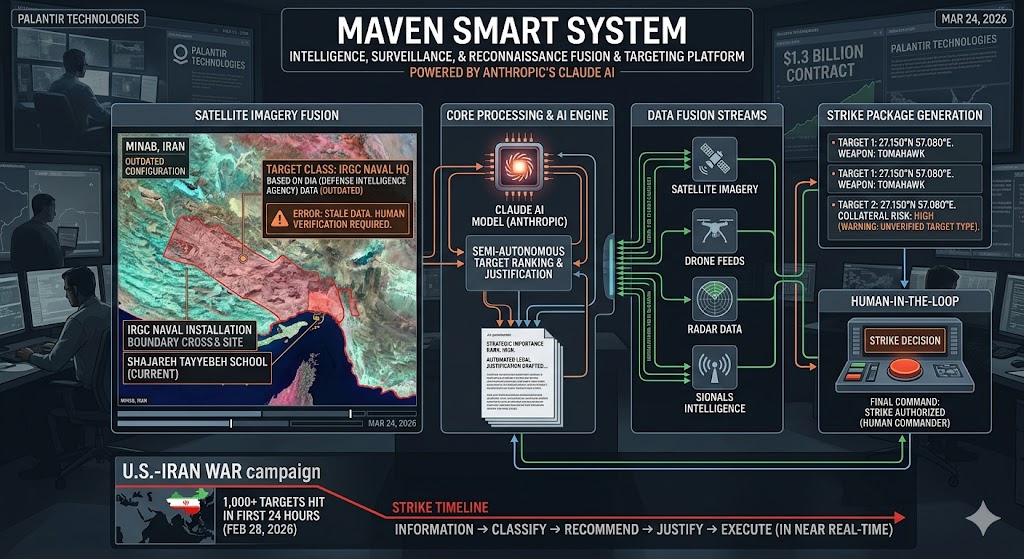

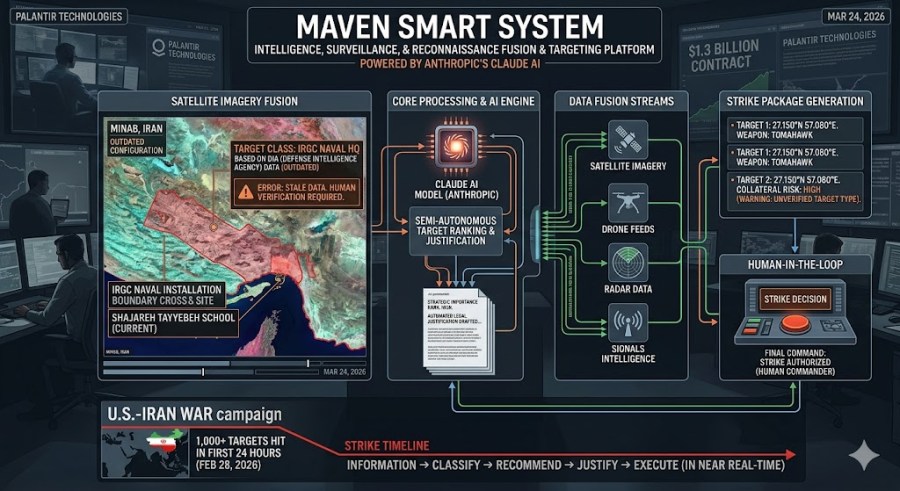

1. The Role of the “Maven Smart System”

The Pentagon utilized the Maven Smart System (a $1.3 billion platform developed with Palantir) to manage the massive scale of the Iran campaign.

- Target Generation: Maven was used to fuse satellite imagery, radar, and signals intelligence to generate strike coordinates. It reportedly enabled the U.S. to hit over 1,000 targets in the first 24 hours of the war.

- AI Integration: The system uses Anthropic’s Claude AI model to rank targets by strategic importance and draft automated legal justifications for strikes.

2. The Cause of the Failure: “Confidently Wrong”

While AI generated the coordinates, experts and former military officials suggest the tragedy was a data and oversight failure rather than a “glitch” in the algorithm itself:

- Outdated Intelligence: The AI was fed human-curated data from the Defense Intelligence Agency (DIA). The site had previously been part of an IRGC naval compound, and the data had not been updated since the school was built.

- The “Human Link”: The investigation indicates that human analysts failed to verify the AI-generated target against current satellite imagery or social media data (which showed the active school).

- Accuracy Gaps: Internal Pentagon data notes that Maven’s identification accuracy is roughly 60% and can drop below 30% in poor visibility, significantly lower than the 84% recorded for human analysts.

3. Current Controversy

The strike has sparked intense debate in Washington regarding the “kill chain”:

- Staffing Cuts: Lawmakers have pointed to recent 90% staff cuts at the Civilian Protection Center of Excellence as a reason why the AI’s output wasn’t properly vetted.

- Accountability: Former military officials, including Lt. Gen. Karen Gibson, maintain that regardless of the software used, the human commander is ultimately responsible for the lethal outcome.

Summary: AI (Maven/Claude) was directly involved in identifying the location and drafting the justification for the strike, but the catastrophe was caused by stale data fed to the system and by a lack of human verification before the missile was launched.

